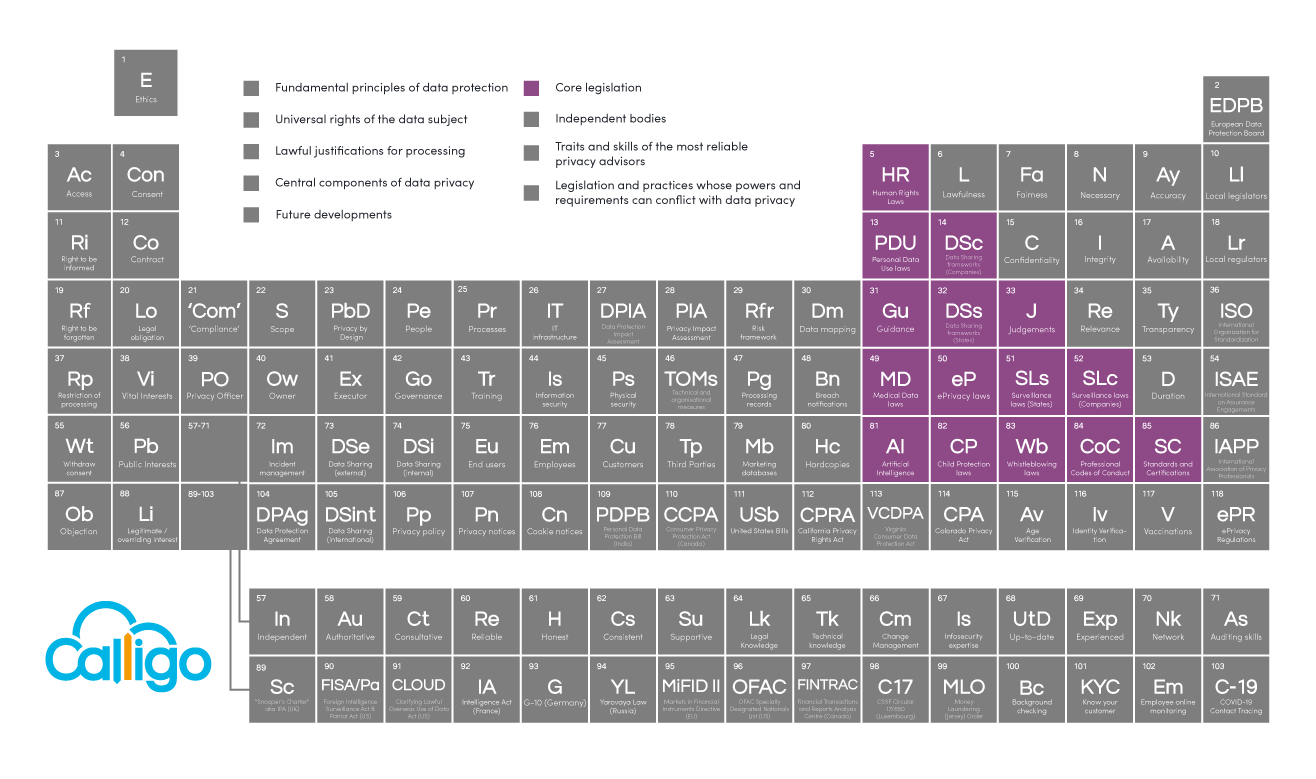

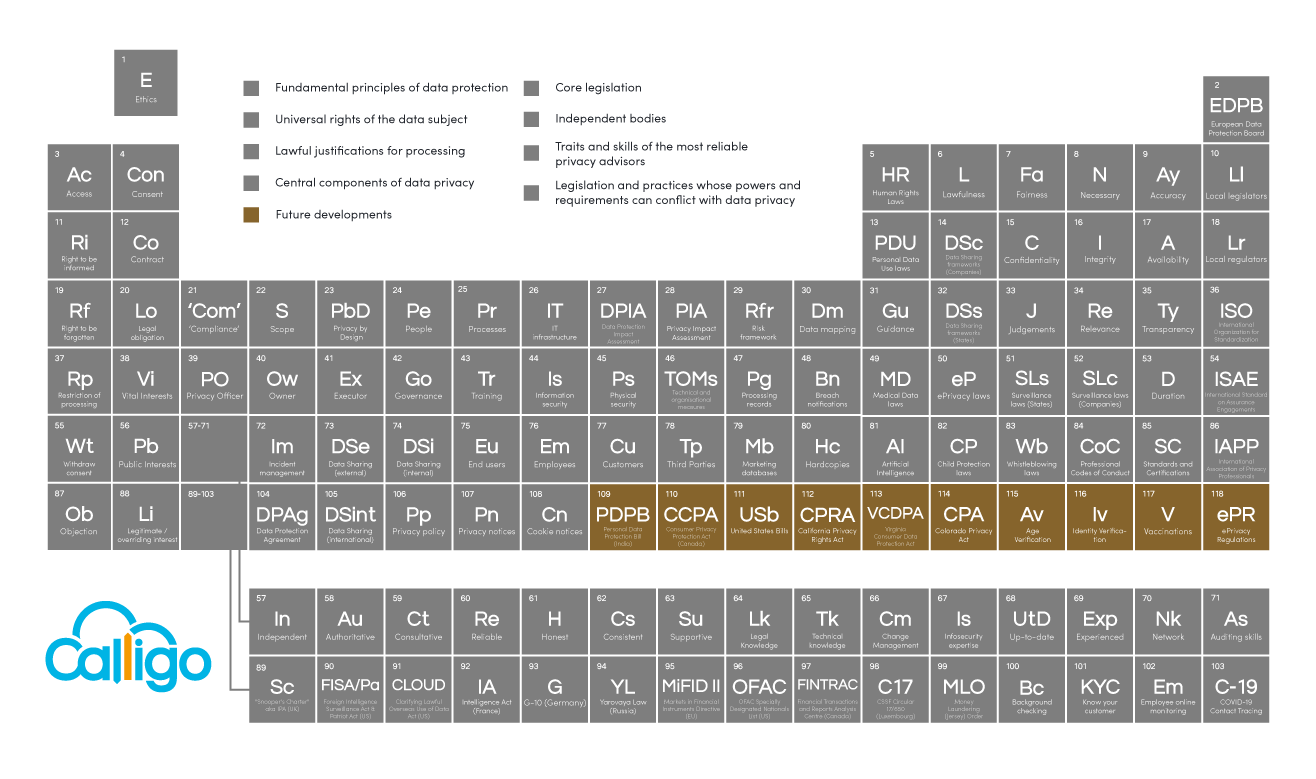

The Data Privacy Periodic Table – our most significant update yet

Since our last update in January, there has been an unprecedented amount of activity in the data privacy world. And yes, we probably do say that every time!

New laws have passed in Virginia and Colorado. The UK’s post-Brexit EU adequacy was confirmed. Plus, of course, there were the EU’s significant changes to Standard Contractual Clauses and the reawakening of the debates over Identity Verification, especially in the context of social media.

These industry landmarks and others, plus the way that data privacy has become – quite rightly – a fundamental part of topical conversations surrounding vaccinations and identity, all combine to require us to make the most substantial changes to the Periodic Table of Data Privacy yet.

See below what we have changed and what updates we have made.

Remember: this is an open project, contributed to by the entire industry. We encourage input from you all, and receive dozens of suggestions between each update.

If you have any comments or want to discuss in more detail, you can contact me here.

Restructuring the Core Legislation section

There are now too many laws being passed and bills being introduced to name them all individually in the 15-element Core Legislation section. We have therefore restructured this area to show the 15 categories of data privacy legislation, standards and case law that prescribe how data privacy rights are protected in practice.

Human Rights Laws

The protection and sanctity of a person’s complete privacy (i.e. not just in reference to their data) is enshrined in Article 12 of the UN’s Universal Declaration of Human Rights from 1948, from which most other human rights legal frameworks then derive.

No one shall be subjected to arbitrary interference with his privacy, family, home or correspondence, nor to attacks upon his honour and reputation. Everyone has the right to the protection of the law against such interference or attacks.

Article 12 of the UN’s Universal Declaration of Human Rights 1948

Everyone has the right to respect for his private and family life, his home and his correspondence.

Article 8 of the European Convention on Human Rights 1950

Personal Data Use Laws

This is the category into which most data privacy laws fall. These are laws and regulations that govern how data subjects’ data can be used by governments and organizations, such as the EU’s GDPR and Brazil’s LGPD/GDPL.

Data Sharing Frameworks (Companies)

These are the mechanisms through which personal data can safely cross borders. These include international agreements – for example, the EU’s adequacy agreements – that recognise each other’s data privacy regimes as sufficiently robust such that no additional safeguards are legally required for relevant citizens’ data to be shared.

This element also covers Standard Contractual Clauses (SCCs) aka Model Clauses – the template legal clauses that the EU has provided for companies to include in their data sharing agreements with third parties and even between related corporate entities whenever EU personal data leaves the EU.

A note on the UK’s Adequacy ruling

On 28th June 2021, the UK was awarded adequacy by the EU, approximately six months post-Brexit and just two days before the expiration of the ‘interim period’ that maintained the status quo until adequacy was awarded.

This was achieved in record-breaking time (far surpassing Argentina’s previous 18 months), but with speed came limitations. Of course, the UK’s prior membership of the EU and enactment of the GDPR into law via the Data Protection Act 2018 helped secure adequacy. But regardless, there is an unprecedented ‘sunset clause’ that states the EU will monitor the data privacy situation in the UK until 2025, and can revoke adequacy at any time if the UK deviates from its current position.

This will not be taken lightly by the UK. Its stated intention is to become a ‘centre of excellence’ for the development and use of AI in industry, especially in FinTech. And as is frequently noted, AI and data privacy are often in conflict. We have already seen that Brazil’s laws on biometrics are diametrically opposed to France’s. Meanwhile, UEFA and the UK Police are pro-facial recognition to prevent football hooliganism, while privacy advocates are not. The UK may have to become a leader not just in AI, but in Ethical AI in order to preserve its adequacy ruling.

A note on the recent Standard Contractual Clause (SCC) developments

SCCs have come under extended scrutiny recently: in June 2021, the EU released its new versions, made necessary after the Schrems II judgement made it clear that the previous format was not suitable – not least because it only covered data being moved from an EU Controller to a non-EU entity, not from an EU Processor.

The new SCCs cover all sources and directions of data movement, and also include a set of technological and organisational requirements that both parties must adhere to (such as requiring data encryption), ensuring they are less a legal tickbox exercise, and instead create actual practical privacy safeguards.

It is worth noting that the old SCCs are not dead. Not only do they remain valid for new contracts until 27th September 2021, but companies also have until 27th December 2022 to replace them in any existing contracts. Plus, following Brexit, the new SCCs are not recognised in the UK, so contracts governing transfers out of the UK will require the old SCCs until the UK’s Information Commissioner’s Office (ICO) releases its bespoke UK clauses, which are due in Summer 2021. You can read more about the new SCCs here.

Guidance

Most data privacy laws are supplemented by formal guidance on how the law should be applied, to clarify subsequent ambiguity or even to close loopholes.

As a forerunner in data privacy, the GDPR arguably has the most notable library of guidance, covering topics as diverse as how to determine territorial scope, DPO conflicts of interest, and the use of cookies.

Does your DPO have a Conflict of Interest?

Your DPO should be knowledgeable, experienced and qualified, but not everyone is suitable for the role

Data Sharing Frameworks (States)

In contrast to the above, the laws that this element describes are less about how a company may or may not use personal data, but are specifically about whether and how they may share it.

Examples notably include California’s CPRA – the amended version of the CCPA – which was specifically created to regulate inter-company data sharing.

Judgements

This is essentially case law – the legal principle that past legal decisions that applied statutory law to specific scenarios serve as an indicative (though not binding) precedent for future similar situations. Judgements may also publicly call into question the suitability of particular laws or mechanisms, and trigger significant change.

One of the most powerful recent judgements in data privacy was Schrems II which, as mentioned above, put SCCs at risk, resulting in their reconstruction.

Medical Data Laws

Rather than relying on generic data privacy laws, the way in which some nations intend or need to use patients’ healthcare data requires additional specific data privacy protection and protocols. The most well-known examples are the US’ HIPAA and HITECH.

ePrivacy Laws

A fast-growing area of legislation that is trying to keep up with balancing the desires and advanced capabilities of the advertiser with the privacy and interests of the data subject, e.g. CASL and the EU’s ePrivacy Directive (until the much-debated ePrivacy Regulation repeals it).

State Surveillance Laws

Seemingly the antithesis of data privacy, these laws articulate precisely when – and only when – an individual’s right to privacy may be overridden by national security and interest.

Examples include the UK’s Investigatory Powers Act, and the US PATRIOT Act and CLOUD Act. Many of these also appear in the Periodic Table’s bottom section – Legislation and practices whose powers and requirements can conflict with data privacy.

Company Surveillance Laws

Companies and even individuals are required to preserve individuals’ rights to data privacy when performing any form of surveillance or recording, including telephone recording or the use of CCTV, even domestically.

Artificial Intelligence Laws

As recently as three months ago, this may not have been a category. In April 2021, the EU published its proposed AI Regulation. The proposal sets out obligations for the responsible use of AI, including the maintenance and oversight of data privacy.

On release, it was described as “the first-ever legal framework on AI” and it certainly won’t be the last regulation or law in this category.

Child Protection Laws

A category dedicated to those laws that protect the privacy and safety of children and their data (COPPA for example).

Whistleblowing Laws

These laws protect the privacy of those who expose corruption and crime, even in the face of judicial proceedings. They exist both internationally, for example within the African Union, and nationally, with 59 countries having enacted their own.

Professional Codes of Conduct

The way in which some industries operate, and the data they rely on and routinely process, place additional obligations on how they manage that data. Industries such as financial services and gaming, and many others, have strict requirements around data management, responsibility and use – all of which directly or indirectly impact data privacy.

Standards and Certifications

There are many standards and certifications that attest to an organization’s determination to protect data privacy, whether in specific circumstances or industries (PCI DSS for example, or the IAB Consent Framework), or more sector-agnostically (ISO 27701). While voluntarily applied for and maintained, these standards often serve as bare minimum requirements for organizations’ supply chains, or membership of industry bodies.

Restructuring the Future Developments section

The degree of change over the last few months, plus the restructure of the Core Legislation section, meant that we also had to make substantial changes to the Future Developments section. Brexit is complete, and because the UK’s adequacy was confirmed so quickly, it simply drops into the new State Data Sharing frameworks element above. The ‘EU-US transfer mechanisms’ that we were tracking after Schrems II drop into Company Data Sharing frameworks. And of course, AI and its need for regulation to prevent conflict with data privacy is no longer a likely Future Development, but is well and truly here.

Some pre-existing Future Development elements remain, such as India’s privacy bill and the various US bills and enactments. Meanwhile, new concerns and debates have arisen, around the issues of public safety and identity.

United States Data Privacy Bills

Here we will track the various US state data privacy bills, and create new elements for bills that pass into law, keeping them in Future Developments until their effective dates. The two that we are tracking most closely at the moment are Washington State and New York.

Virginia Consumer Data Protection Act

In March 2021, Virginia enacted its own privacy law. You can read more about our thoughts on the Virginia Consumer Data Protection Act here.

Colorado Privacy Act

And then in June 2021, Colorado was the third US state to pass its own data privacy bill – the Colorado Privacy Act. Between now and its coming into effect in July 2023, it seems there is the possibility of California-esque amendments and additions. There are already debates over its suitability, mainly regarding the fact that consumers have no right to sue (similar to the new Virginia law), the nuances of the opt-out requirements, and the 17 ‘blanket exceptions’ that rule data collected by airlines, utilities, healthcare providers and various federal and state organizations out of scope.

Age Verification

There is increased demand among companies for better age verification processes. In many industries – especially online – the scrutiny, risk of penalties and risk of brand damage for mistakenly providing unsuitable services to minors are growing constantly as more and more Child Protection laws are enacted or proposed.

However, while companies want the ability to verify customers’ ages, they do not want the burden of doing it themselves, as this means knowingly processing more minors’ data to do so, creating additional data privacy obligations. It is a question that urgently requires solving, but also requires someone to take responsibility for it.

Identity Verification

The improper and abusive use of social media has shot up the news agenda since our last update. Celebrity complaints and even tragedies caused by anonymous social media users, plus concerns relating to terrorism, cybercrime and exploitation of children and women have all led to loud calls for users to be required to verify their identity before being able to use social media accounts.

However, such requirements may violate an individual’s right to privacy, or raise other related ethical concerns. Centralised identity verification would be a solution to this and Age Verification (above), and perhaps Vaccinations (below) – and many more live issues far beyond social media – but it is a solution riddled with moral, legal and practical difficulty. The debate, however, will not disappear: it is likely to be frequently reawakened, and data privacy principles and laws will be at the heart of it.

Vaccinations

The desire of governments and employers to understand who has been vaccinated against COVID-19 and who has not, versus the citizen/employee’s right to privacy, will be an ongoing issue.

Similar to the difficulties employers notoriously faced when tracking employees who tested positive for the virus, and to the State Surveillance Laws above, the question is whether protecting wider public safety is sufficient justification to override the individual’s right to privacy. And if so, how that data is collected, treated and even shared will be an issue for months to come.
